SEO

Without relevant user traffic, publishers will always struggle to monetize their content. Paid traffic is not sustainable as a long-term strategy. That’s why it is imperative for publishers to be up to date and proactive in their SEO efforts.

Join us to explore best practices in technical and content SEO, learn how to use various tools, discover new tech, and read practical tips and advice from publisher SEO experts.

-

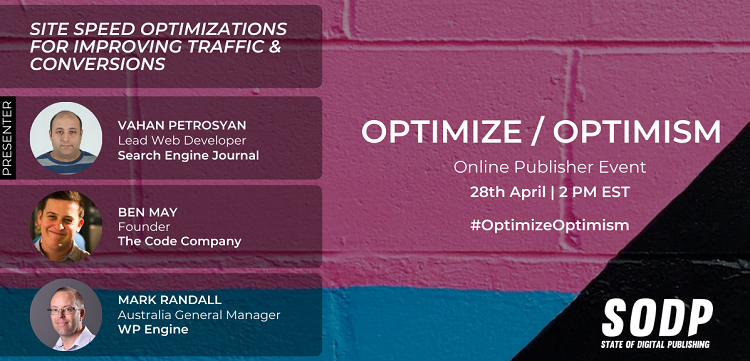

Site Speed Optimization for Improving Traffic and Conversions

30% of the world’s publishers are on WordPress. However, sites also…

-

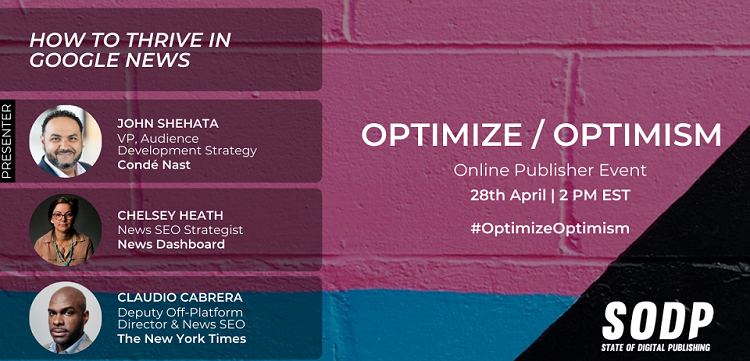

How to Thrive in Google News

Parse.ly recorded last year that Google News and Search account for…

-

Digital Newsroom Ops – Covering Live Events

SODP’s Founder, Vahe Arabian, joined the speaker board at the Digital Newsroom…

-

Martin Splitt – Google

Martin Splitt is a developer advocate on the Google Search Relations team in…

-

Google News Original Reporting Winners and Losers

This past September, Google released a change in the way they evaluate…

-

Ultimate guide to voice search SEO [Publishers Edition]

There was a time when speaking commands or questions to a machine…

-

Podcast SEO: how to help users discover your podcast

Podcast discovery: optimizing for search engines, podcast apps, and directories. There…

-

Using category and tag pages for SEO [Publisher Edition]

A taxonomy system is an information architecture tool to organize any given…

-

Google AI Experiments: Helping You Appreciate Marketing Automation

The release of Google AI Experiments a few days ago is…